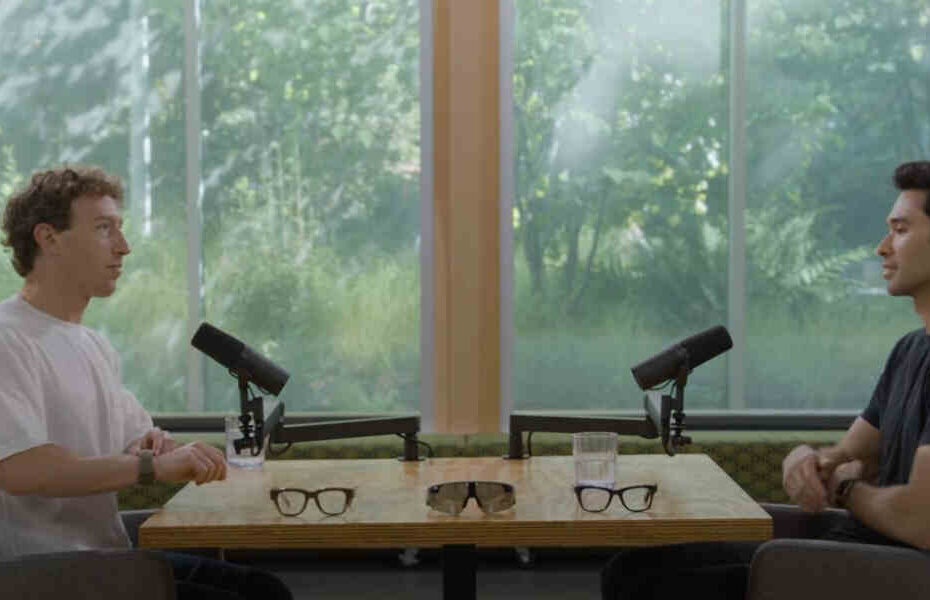

Read the full transcript of Meta founder and CEO Mark Zuckerberg in conversation with host Rowan Cheung on “AI Glasses, Superintelligence, Neural Control, and More”, following the announcements at Connect 2025, September 18, 2025.

INTRODUCTION

ROWAN CHEUNG: Thanks so much for being here.

MARK ZUCKERBERG: Yeah, good to see you. Thanks for doing this.

ROWAN CHEUNG: So today we’re talking everything Meta Connect 2025. Can you give us the rundown of everything you’re announcing and what you’re personally most excited for?

The New Lineup of AI Glasses

MARK ZUCKERBERG: Yeah, so the main things that we announced at Connect were our fall 2025 line of glasses. And you have them here so we can just start and go through them.

I mean, the first one is the next generation of Ray-Ban Meta. That’s sort of the classic AI glasses that we’ve shipped so far. They’re some of the fastest growing consumer electronics of all time. We’re very happy with that. And a lot of the improvements that we have for these are we doubled the battery life, we have 3K video resolution in them now for capture, and we’re introducing new AI features like this thing, Conversation Focus, which allows you, if you’re in a loud place, to basically turn up the volume on friends who you’re talking to. So if you’re in a restaurant or something like that, then that I think is going to be really neat.

Then we’ve got this guy, which is the Oakley Meta Vanguards. This is our second collab with Oakley. It’s more of kind of a performance glasses, but these I think are really cool for a number of things. And they’re designed for a number of things around sports. You’ve got the camera that’s centered, so that’s great for alignment. It’s wider field of view, louder speakers, they’re water resistant. They can connect with your Garmin watch. So you can be running a marathon and basically, you know, you can tell it every mile, capture a video, and then at the end it’ll produce a video for you where it stitches them all together and puts your stats from Garmin on top of it. So I think that’s pretty neat.

The Revolutionary Ray-Ban Meta Display with Neural Control

But I think the most interesting thing by far that we announced is this one, which we call Ray-Ban Meta Display. And that is because it is the first AI glasses that we have shipped that have a high resolution display in them. And the other big breakthrough is that they pair with this guy – the Meta Neural Band, which is the first mainstream neural interface that we’re shipping as the way to control them. And I know that’s pretty neat.

I mean, basically every computing platform has its own new input method, right? So when you went from computers with the keyboard and mouse to phones with touch screens, you kind of got a completely new input method. And the same, I think, is going to be true for glasses and having a neural interface where you can just send signals from your brain with micro muscle movements like this. I mean, this is basically about as much movement as you need to make. And you can enter text neurally. Just all the fun stuff that you got a chance to try out. But I think this is a pretty big breakthrough, so I’m very excited about this. But overall, I mean, the whole lineup is good. So it was a fun Connect.

ROWAN CHEUNG: So you got the Ray-Ban Metas for kind of everyday, the Oakleys for athletes, and then the Displays now for power users. How do all these glasses kind of tie into that personal superintelligence vision?

Glasses as the Ideal Form Factor for Personal Superintelligence

MARK ZUCKERBERG: Yeah. So I mean, our theory is that glasses are the ideal form factor for personal superintelligence because it’s the only real device that you can have that can see what you see, can hear what you hear, can talk to you throughout the day, and can generate a UI in your vision in real time.

And so there are other devices that people have. I mean, obviously you can have some AI on your phone, you can do some AI on your watch. You can do some of it if you just have AirPods type thing. But I think glasses are going to be the only thing that can do all of the pieces that I just said, kind of visual and audio in and out, and that I think is going to be a really big deal.

ROWAN CHEUNG: So I got to try it for this interview.

MARK ZUCKERBERG: Yeah.

ROWAN CHEUNG: And I have to say, there were way more use cases than I initially thought there were going to be. Like the AirPods, for example, have the live translation. But this has live translation, the GPS, the captions; there’s so much more.

MARK ZUCKERBERG: Yeah, the subtitles are awesome.

ROWAN CHEUNG: It’s awesome. So I’m curious, what are your favorite kind of use cases that you’ve been using them for?

Seamless Messaging: The Killer Feature

MARK ZUCKERBERG: I mean, the thing that I really had in mind when we were designing them is just sending messages, right? I mean, this is the thing that I think we do the most frequently on our phones. I would guess that we all are sending dozens of messages a day. I don’t really need to guess: we run a lot of the messaging apps that people use, so I think we know that people send dozens of messages a day. And we wanted to make it so that the experience is just really great.

So now with the glasses, a friend texts you, shows up in the corner of your eye for a few seconds. It’s off center so it doesn’t block your view. It goes away quickly. It’s not distracting. But if you want, you can just respond as easy as moving your finger like this.

One of my kind of mental models for this is that in-person conversations are awesome. The one thing that I like about Zoom is that you can multitask, right? It’s like if we’re having a conversation and you say something, I want to get some context on what you’re saying, or you remind me that I should go do something, or you bring up a person and it’s like, “Oh, yeah, I’ve been meaning to reach out to that person.” If we’re having a live conversation, I’m not just going to do that right then. And then the chances are that I’m actually going to forget by the end of the conversation and not follow up on most of those things.

On Zoom, I kind of feel like you can just kind of text someone off to the side and that’s fine. And this, I think is going to bring that to physical conversations too, where it’s like, you don’t have to break your presence. You don’t have to be rude, right? You’re not like breaking out a phone. You’re not really, I mean, you can continue to really pay attention to the person. It’s just like a very quick gesture with your wrist.

And I keep on having this phenomenon where there’s some information I want to have in the middle of a conversation. Normally I would have had to wait till the end of the conversation, text or call the person to get the information, and then go back to the person I was having the conversation with. Now I just get the information in the middle of the call. I just text the person, the information comes in, and then it’s like, okay, you can have a much better and more informed conversation. So texting, I think, is really going to be the big thing.

ROWAN CHEUNG: So how does the texting feature work? I know you can talk to Meta AI, but you’re saying you can actually write it down and it automatically goes to the phone. Is that…

MARK ZUCKERBERG: Yes. The glasses.

ROWAN CHEUNG: Okay, to the glasses, yeah.

MARK ZUCKERBERG: With the neural band.

ROWAN CHEUNG: So, like, it’s really just handwriting?

How Neural Text Input Works

MARK ZUCKERBERG: Yeah. I mean, you can kind of think about it as if you’re holding a small pencil, and then what motion you would make to write out certain letters. But then what’s going to happen is that the neural band is going to be personalized to you over time. So it’s going to learn: okay, how do you make a W or a J differently from someone else? And it will learn, and you will kind of co-evolve with it. It’ll learn what the most minimal version of you making a J is, or an O or whatever, and then with the feedback loop around that, you’ll just be able to make increasingly subtle movements over time, where it’s not actually picking up motion, it’s picking up your muscles firing.

So over time, I think you’re basically going to be able to just move your muscles in opposition to each other, not even really move your hand, and be able to send messages or control a UI with your hand at your side, in a jacket pocket, or behind your back. It’s not hand recognition. It’s not the cameras on the glasses looking at what your hand is doing. It’s this band picking up micro signals that your brain is sending to your muscles before they even move. So you don’t actually have to move. You just need to be able to send the signals to it and it picks it up.

ROWAN CHEUNG: Yeah, so the wristband reads the electrical signals before you even move.

MARK ZUCKERBERG: Yeah.

ROWAN CHEUNG: Why is that important over just like adding buttons to the glasses or like improving the voice commands?

The Advantage of Neural Control Over Traditional Inputs

MARK ZUCKERBERG: Well, I mean, okay, for voice: a lot of the time you’re around other people, right? So I just think that you want a way to control your computing device that is private and discreet and subtle. And one of the things that we thought about was, okay, can we get whispering to work? Because by the way, voice does work too, right? You can control the stuff with voice. You can do voice dictation, you can talk to Meta AI with voice. But I just thought that there needed to be a way on top of that that wasn’t voice, for when you want to do it more subtly and privately.

We could be having this conversation. I could have the glasses on and I get a message. It comes in and, okay, we’re talking and I’m writing it and I’ve sent it. It’s done. In that time, I could have sent a 10-word message and that would be fine.

ROWAN CHEUNG: That’s so crazy.

MARK ZUCKERBERG: And then, all right, now I got my response back in, and okay, here maybe I’m writing another response and whatever. Yeah, okay, done. So I think it’s going to be awesome.

And then in terms of buttons. Yeah, that works too. I mean you can take a photo by tapping the button on any of these. You can hold it down to take a video, you can skip to the next track with the swipe, or you can start or stop music by tapping on it. But I think the point is you don’t necessarily want that to be the only way that you could do it. Like now with the neural band, I can also just be like, I don’t know, start and stop music with it. Put my hand on my side. It’s like, all right. It’s a very subtle gesture. You can’t even tell, right?

The Future of Discreet Computing

ROWAN CHEUNG: We were joking in the demo room that it’s almost like right now when you go to classes, all the teachers are like, “Oh, put your phones down.” They can see when students are just checking their phones, distracted. But now it’s going to be like, “Hey, keep your thumbs out,” almost.

MARK ZUCKERBERG: Because it’s like, I think it’ll be…

ROWAN CHEUNG: “Take your glasses off or take your…”

MARK ZUCKERBERG: “Glasses off and show your thumbs.”

ROWAN CHEUNG: Maybe both. Yeah, but yeah, so looking ahead, I guess what you’re saying is like, typing and speaking might not be enough to give enough data to AI.

MARK ZUCKERBERG: What do you mean typing and speaking might not be enough to give?

ROWAN CHEUNG: Well, now we have electrical signals we can also give to the…

MARK ZUCKERBERG: Yeah, for the UI. Yeah, for controlling the UI.

ROWAN CHEUNG: Do you think, like, the neural band is almost like a new interface to interact with AI?

Neural Band Controls and User Experience

MARK ZUCKERBERG: Yeah, I mean, I think it’s AI and it’s the glasses. I mean, there’s some parts of the glasses that are like, okay, you just have a menu of your apps and you basically can just swipe through it very subtly with your hand. And it’s like, okay, now I’ve selected. Okay, now I’ve picked that. I mean, this is basically what the interaction looks like, effectively. It’s like, okay, swipe a couple of times. I brought up the camera. Oh, now I have a viewfinder so I can see exactly what the image is. I want to zoom in. I just turn my finger a little bit.

Actually, one of the coolest is when you’re playing music: the way that you change the volume is you just pretend that there’s a volume dial and you just turn your wrist. It just feels like magic. It’s really fun. You can bring up Meta AI anywhere. You just tap twice. But, I mean, tiny, right? It could be at your side. No one would even notice.

So, yeah, I think this is just going to be by far the most intuitive way. But if you don’t have it, yeah, you can control them with voice, you can control them with the buttons. I mean, there are different ways to do it. Yes, it’s going to be both the AI and the UI of glasses, and potentially over time, other things beyond glasses, I think, that you’ll be able to control with the neural band.

ROWAN CHEUNG: That’s really fascinating. How long did it take you to pick up the controls for the neural band?

MARK ZUCKERBERG: Yeah, for the neural band, not that long. I mean, it’s pretty intuitive. The way that you kind of think about navigating is that it’s kind of like a D-pad. You kind of swipe your thumb right, left, up, down. You bring up the menu by, you know, you can tap twice. You bring up Meta AI by tapping twice. You enter text as if you have a pencil.

The trick is that when you’re learning up front, the motions are a little bit bigger. And then very quickly, within a few days of using it, you get to like very subtle movements. And the reality is that a lot of the optimization isn’t going to be on our side or your side. It’s going to be the machine learning system over time getting personalized to you and getting better.

And the times when the neural text entry is slow, it’s because I make a mistake and the autocorrect doesn’t fix it. So now I have to go back manually and re-enter the text. But this is just like standard machine learning stuff. As this just gets better and better and learns your patterns better, it’s going to be able to autocomplete more of what you’re doing. So you won’t even have to make all the motions.

The Evolution of Smart Glasses

ROWAN CHEUNG: So you’ve been calling glasses kind of the next platform for years. But the original Ray-Ban Metas really took off with just audio and AI. What did you guys stumble on there?

MARK ZUCKERBERG: So we have a few principles in building glasses, and the three principles that we talk about the most are, first, they have to be great glasses. Right. So you have to think about, okay, before you even get to any of the technology, if you’re going to wear these on your face all the time, they need to be good looking, they need to be light.

And I think a lot of people who are designing technology make something that’s kind of bulky and they add a bunch of cool functionality to it. And they’re like, “Why don’t people want to wear this?” It’s like, well, most of the day people aren’t taking photos or something. I mean, most of the day it’s just there. And the magic of these is that they’re good looking, comfortable glasses that you want to wear and then you have them on your face. So when you do want to listen to music or call AI or whatever, it’s available to do that. So good looking glasses first.

The other big principle is that the technology needs to get out of the way. Right. So I think a lot of people, when they’re designing technology, have this impulse to kind of add a lot of flourishes and make it so that the thing that they’ve built is kind of center stage. And one of the things I keep pushing back on with our team is: no, actually, look, especially if you’re building glasses, it is extremely important that whatever technology you have, it is there when you want to use it, and then it gets out of the way. You don’t want random stuff in your vision.

It’s like, I don’t care how cool of an animation you made on the design team. We want this to be super minimal, right? A message comes in, you see the message. It goes away when you don’t want it. I think the classic design just distilled those principles. So, yeah, I think it was kind of a hit for those reasons.

The third principle, for what it’s worth, is take superintelligence seriously. So it’s kind of a mantra internally that we have, which is this idea that AI is going to be the most important technology in our lives. And we want to make sure that AI is in service of people, not just like something that sits in data centers and automates stuff.

And so we designed these, even the early version, the first version of this, to be able to just have easy software updates, to have improvements like the Conversation Focus thing that we talked about. So that way you can get, like, you have this great accessibility feature. You’re in a loud place, you can turn up the volume on a person, right? It’s like kind of crazy, but it’s like, okay, so you build in the sensors first that you think that the AI is going to need over the next couple of years, and then you make it so as you get the software working, you can just update it. So that way you can bring the latest AI technology to people, make them smarter with a software update.

Replacing the Phone

ROWAN CHEUNG: Well, I’m a big fan. Personal question. How far away do you think we are from these glasses being so good you just replace your phone?

MARK ZUCKERBERG: So I think about it a little bit differently. I think about what your main computing device is. Right now, phones are your main computing device. It’s my main computing device for sure. But I didn’t get rid of my computer, right? I have my computer, but I just use it less. A lot of the time, I’m even at my desk and my computer’s right there, but if I want to go do something, I just take out my phone because that’s my primary thing that I do.

So what I think is going to happen here is that I don’t think we’re going to get rid of our phones anytime soon. I just think our phone is going to stay in our pocket more or in our bag. And even very subtle things like, I don’t know how many times a day I used to look at my phone to see what time it was. Now I don’t do that when I have the glasses. You just tap quickly. The clock comes up. Done. It’s like, okay, I don’t need to take out my phone to look at the time. Okay, now I don’t need to take out my phone to look at messages. Right.

It’s like, okay, now with these, the camera’s great, but now with the viewfinder, I know exactly what I’m capturing. So I think you just go kind of use case by use case, and all these things I used to take out my phone for, I now don’t. I think that’s sort of the way it’s going to go. But I would be surprised if we get rid of our phones anytime in the next five years. I just think it’s going to be slowly using your phone less and less.

ROWAN CHEUNG: Yeah, I mean the GPS feature is another one. Instead of having to look at my phone constantly, I’m going to use this like all the time. Just have it in my glasses and navigating me where I need to go with lines. Translation even was useful. There’s a lot. I’m curious to see how other people are going to be using it. But I think another thing people are going to be really curious about is the metaverse. How much of the vision is still distilled in these products?

The Metaverse Vision

MARK ZUCKERBERG: Well, I think we’re getting closer to it. Right. So the metaverse is obviously a very visual experience of presence. There are some parts of it, like stereo audio, I mean 3D audio, which you can get, and that is great. But we’re starting to get the display, and then the Orion prototype that we showed last year has a wider field of view. It’ll be a more expensive product when it’s ready.

But I think you’re basically going to get to… This is one where you can have a hologram, you can kind of have context. It’s enough to kind of watch a video or have a message thread. But this product, especially because it’s monocular, it’s not putting 3D objects in the world. Right. So there still are things around delivering this sense of presence, like you’re there with another person, that I think are going to be really magical.

And when we get the consumer version of Orion, you’ll really be able to start doing that. So I think we’re kind of inching our way there. But this is a big breakthrough, having the first kind of high resolution display with the neural band. And it’s going to be a good step for learning how that goes. But yeah, I think the vision is that all of the kind of immersive software around presence that we have for VR, we would like a version of that to run on glasses too.

The Vanguards for Athletes

ROWAN CHEUNG: I want to switch gears to the Vanguards. I love the design. I recreationally go on runs and cycles, so I am using them as well. How did you build these with athletes in mind?

MARK ZUCKERBERG: Yeah, yeah, they’re fun, really stylish. Well, I think a lot of people on the team are pretty intense athletes, and some of it is we just use the Ray-Bans, and I can’t tell you how many pairs of Ray-Bans I’ve fried taking them surfing, but it was worth it. But they’re not water resistant. So I mean, it’s pretty obvious. You’re like, okay, we want something that’s water resistant.

The extra sound, the kind of power in the speakers (these are 6 decibels louder), is really valuable and helpful when you’re doing loud things, like if you’re cycling at 30 miles an hour and it’s windy. The other day, a few weeks back, I was taking a call on a Jet Ski and I could hear the person completely fine over the engine of the Jet Ski.

And we have this advanced noise reduction in the background. So we’ve designed these with the microphone in the nose pad, which basically means that you could be in a wind tunnel and the other person on the other end of a phone call would not be able to hear any of the background noise. You just come in crystal clear. So I think stuff like that is awesome.

Then there’s all this other stuff that I think you want for sports. The video stabilization is good. The pairing with Garmin and Strava I think is good. We put an LED in the corner so you can have it light up to give you a reminder, to make sure you stay on the pace that you want or in your target heart rate zone. So, yeah, I mean, there’s a lot of things like that that I think are going to be pretty neat for athletes.

ROWAN CHEUNG: Yeah, it’s fascinating. Why do you think it was important to design so many different glasses for different lifestyles? And how much broader do you really expect to go from here?

Different Styles and Aesthetics for AI Glasses

MARK ZUCKERBERG: Well, I mean, I think people have different styles. This isn’t like a phone where everyone’s going to be okay having the same thing and just like, okay, maybe I have a slightly different color case, or I put a different sticker on my case and then I’m good.

I think people have different face shapes, they have different aesthetics. I mean, people wear different kinds of clothes. I think this is like an important part of our identity and sense of style. And some of it is functional, too. Some of it is a little more lifestyle, some of it is more active.

Part of this is some people prefer thinner frames, some people prefer thicker frames. You will always be able to fit more technology in thicker frames. So as we get to more display, more advanced holograms, it will always be a little bit thicker of a frame than what you can do for the thinner one.

So I think that there’s a choice that people will make around, “Okay, do I prefer the aesthetics and the simplicity of the thinner ones, or do I like the aesthetics of the thick ones?” Fortunately, big glasses are in style, so that works. There’s the whole spectrum and then there’s also the price point and affordability. Less technology, you’re always going to be able to offer for a more affordable price. And that’s going to be great too, because we want to make it so that there’s more than a billion people – it’s somewhere between 1 and 2 billion people in the world who have glasses for vision correction today.

I don’t know, I think within five to seven years, is there any world where those aren’t all replaced with AI glasses? And it feels to me like it’s kind of like the iPhone came out and everyone had flip phones, and it’s just a matter of time before they’re all smartphones. So I think that’s going to be the case with glasses, too. But people have different glasses and they want different glasses and they want different glasses at different price points and styles. So our goal is to just work with a lot of iconic brands to do some great work.

Real-Time Fitness Integration and Coaching

ROWAN CHEUNG: So on the Vanguard, you mentioned the Garmin integration. I thought this was fascinating because this basically allows AI to see your heart rate, pace, location, everything, all hands free while you’re running or cycling. How long do you think it will be before we have a personalized coach that’s there, proactively in your ear?

MARK ZUCKERBERG: Yeah, I mean, it can do that a bit. So I think for running, for example, if you want to ask it what your heart rate is or what your pace is, it can answer now. I mean, I don’t know how hard you run, but when I’m running for performance, I don’t really want to talk to something. You’re probably above that heart rate zone, but there are times when I’m skiing really fast and I want to know how fast I’m going, because that’s kind of fun and you’re not necessarily out of breath doing that.

Yeah, I mean, part of it is being able to talk to it and it can give you the stats in real time. That’s one of the reasons why we built the LED in – we want to make it so that you just have a very simple visual indicator of “Okay, am I on my pace target? Am I on my heart rate target?” But I don’t know, you can get a sense that, over time, it’s probably going to be pretty good to have a display in those too.

ROWAN CHEUNG: I mean, the real time coaching as well. So for an example, if I’m running and I want to stay in zone two, which is below a certain heart rate, but I don’t want to go too hard because I want to stay in this zone. And I guess my question is, will I be able to have a coach that’s telling me, “Oh, hey, slow down a little bit, you’re a little too fast”?

MARK ZUCKERBERG: That’s the goal of the LED to start. And if you’re trying to stay in zone two, you probably can talk to it. Because, I mean, that’s kind of the definition of zone two. It’s like, you should be able to. So I don’t know if it’s the definition, but it’s a property of zone two. So you can basically ask it different stats and it knows if you’re connected to Garmin.

ROWAN CHEUNG: So I know you’ve been training a lot. Have you found any good use of it when you’re training as almost like a coach?

MARK ZUCKERBERG: Well, I take them surfing and they don’t break, which is nice.

ROWAN CHEUNG: There we go.

MARK ZUCKERBERG: So, because the Ray-Bans are good, but like I said before, I’ve fried a few pairs of those.

ROWAN CHEUNG: And they capture moments in real time, too, right?

MARK ZUCKERBERG: Yeah. I mean, one of the nice things about this is because the camera is centered, it’s really perfectly aligned. I mean, the image, really, in the video feels like it’s coming exactly from your perspective. It’s nice even when the camera’s off to the side. But it’s like these things about delivering a sense of presence. They have this feeling where when you get it to be perfect, it really feels like it clicks. And if you’re 2 degrees away, it’s still pretty valuable, but it’s not… But there’s something about having it in the middle that I think is really quite compelling, with the wide field of view.

ROWAN CHEUNG: I’m excited to try them out.

MARK ZUCKERBERG: Yeah.

Raising Kids in the Age of AI

ROWAN CHEUNG: So you’re raising three kids while building superintelligence. I’m curious what conversations you’re having right now about what they’re going to grow up in.

MARK ZUCKERBERG: Well, I mean, it comes up in a bunch of different things, but in our family, we build a lot of robots. I’m really into 3D printing. I think that’s a really fun DIY hobby. My daughters are really into it. They like making stuff.

Kind of got to the point where I’m like, “All right, you need to design your own stuff. I’m not just going to print stuff that we find on the Internet.” So now they’re using all the different tools to do that, and I work with them on that. It’s a fun hobby, but in a bunch of ways, I think of robotics as the thing after you get AI. I mean, obviously they’ll develop contemporaneously to some degree, but I think we’re going to have really smart, intellectual AI, and then you get the robotics, which is going to be the fully embodied version of it.

So, yeah, I mean, there’s not that much that the kids can do with developing AI at their age, but you can actually – they can, and they just enjoy making stuff. So, I mean, kids love 3D printing, and it’s not very complicated, and it’s just a fun hobby.

And so, yes, we have this project going on right now. First, I just ordered a bunch of robots from the Internet that you could assemble, because there’s a bunch of starter packs and things like that, and I love that. And then the next one is basically designing our own. And basically just you 3D print the shell, you have the – whatever. You could probably do a version with the Raspberry Pi or the Jetson and just get that to work pretty well for running language models and doing interesting stuff with it.

ROWAN CHEUNG: Yeah, that’s a step up over Legos, that’s for sure.

MARK ZUCKERBERG: Yeah, yeah, no, that’s true. It’s nice because Legos are – you run out of blocks. That’s true, but with the Internet, you don’t run out.

Teaching Values and Learning How to Learn

ROWAN CHEUNG: Are there any specific traits or skills you’re trying to teach your kids? So, for example, Demis Hassabis, he said learning how to learn is a really important trait. Is there anything you’re thinking of teaching?

MARK ZUCKERBERG: I mean, I mostly try to teach our kids to be good people. I mean, I guess there’s the intellectual stuff that you’re talking about. It’s like, what’s interesting, robots, whatever. But I don’t know, I mean, I think being caring and kind and things like that I think are really important. So just a bunch of values type things like that.

But yeah, I mean, I agree with Demis’ answer too. It’s less about learning a specific thing and more about learning how to go deep on a thing. So I’m a big believer that – so a lot of people say some variant of “Oh, yeah, you want to learn how to learn,” but okay, but how do you do that?

And I guess my theory on how you do that is basically a very depth-first approach. So rather than just trying to have some conceptual framework, you complete a project. So you will learn a lot by building a robot. Whether you actually care about robotics after that is completely incidental. You took a problem. We decomposed it into all these things. We needed to figure out the design for the 3D printing, and she needed to get the printers to work. And then we needed to get the Raspberry Pi to work. And it’s like, “Okay, we’re learning a lot about wheels right now.” It’s like, “Okay, are we going to do treads? Are we going to do wheels? How many wheels? How do you want…”

And that stuff, I think you basically – it’s like you’re learning how to decompose problems, how to debug things. I think that you just learn by doing. So that’s my philosophy on it. And I think just doing stuff where you have common interests. So, yeah, you know, sometimes we build robots, sometimes we watch KPop Demon Hunters. You got to have fun.

Preserving Human Traits in the Age of Superintelligence

ROWAN CHEUNG: Okay. So when we do achieve superintelligence, these AI glasses are going to see everything we see, hear everything we hear, and now even sense some of our muscle impulses. What human traits should we fight to preserve and what should we just let go?

MARK ZUCKERBERG: Well, I’m not sure I agree that they’re going to see everything, or maybe there will be some sensor that does, but they’re not necessarily going to retain it. I’m not sure that you want them to, but that’s a whole separate direction that we could go into. I think just being able to pick out the salient bits is important, and giving people control over that is important, but that’s not really the core of what you’re asking.

So I think, to me, creativity is very important and having a sense of “Here’s what you want to make in the world” and having an idea of how you – I mean, it’s some of the things that we just talked about – how do you decompose that? What are the steps to do that? What are the tools that are going to be most helpful in doing that? I think part of the job of a creator or a builder is to be a master of the tools that are available to them. If you’re not, then you’re not going to be at the edge of your work. You know, it’s a competitive world.

So, yeah, I think all of the AI progress, I think, has been very fascinating because people have sort of conflated intelligence with an intent or a desire to do something. And I think part of what we’ve seen so far from the AI systems is that you can actually separate those two things. The AI system has no impulse or desire to create something. It just sits there. It waits for you to give it directions, then it can go off and do a bunch of work.

And so I think the human piece at the end is going to be, “Well, what do we want to do to each make the world better?” And I think some of that will be around a personal creative manifestation of what you want to build. But I do think some of it, and I think in our industry, we probably underplay this a bit, is just caring about other people and taking care of people and spreading kindness. So I think that stuff is really important too, and I think AI will help with that as well.

The Superintelligence Labs Push

ROWAN CHEUNG: So this conversation wouldn’t be complete without, obviously, the superintelligence labs. You’ve been on a spree over the past couple of months and all over the news.

MARK ZUCKERBERG: Yeah, it’s been busy. Yeah.

ROWAN CHEUNG: When did you decide to make that change? From the outside, there are rumors that you were personally going founder mode, calling researchers, emailing, all that stuff. What drove the decision for this?

Building Meta’s AI Lab: Talent Density and Research Principles

MARK ZUCKERBERG: Yeah, I think, I mean, it was largely that Llama 4 was not on the trajectory that I thought it needed to be on. Llama 4 was in many ways a big improvement over Llama 3. But we weren’t trying to be a bit better than Llama 3, right? We want to build like we’re a frontier lab that wants to be doing leading work.

I kind of felt like I’d learned enough about how to set up a lab that I wanted to kind of reformulate the work that we were doing with a few basic principles. So one is huge focus on talent density. So you don’t need many, many hundreds of people. You need 50 to 100 people, a group science project who can basically keep the whole thing in their head at once. Seats on the boat are precious. Right. It’s like if someone is not pulling their weight on that, then that’s like it has this huge negative effect in the way that it doesn’t for a lot of other parts of the company.

So I think just this absolute focus on having the highest talent density in the industry drove a lot of what you heard about. Okay, well, why am I going out and meeting all of the top AI researchers? It’s like, well, I want to know who the top AI researchers are and I want to personally have relationships with them and I want to build the strongest team that we can. So that was a big part of the focus.

There are other parts too. When you’re doing long term research, one of the principles that we have for the lab is we actually don’t have deadlines that are top down. Right. It’s research. Right. You don’t know how long the thing is going to take. All the researchers, everyone’s competitive. They all want to kind of be at the frontier and doing leading work. So, me setting a deadline for them isn’t going to help. That doesn’t help them at all. Right. It’s like they’re moving as fast as they can to try to understand what we need to solve the problem.

So we organized the lab to be very flat. We don’t want layers of management that are not technical. Right. So the issue is people start off technical and then they go into management and then six months or a year later you still think you’re technical, but you actually haven’t been doing the stuff for a while. So the knowledge just kind of slowly decays or quickly decays in an environment that’s moving as quickly as this.

So anyway, those are the basic principles. But I think that overall, taking a step back, I think that AI is going to be the most important technology in our lives, in our lifetimes. Building the capacity to build absolutely leading models I think is going to be a really critical and very valuable thing for unlocking creativity, building a lot of awesome things. So yeah, I’m absolutely just going to focus and do the things that we need to do in order to make sure that we can continue to do that.

ROWAN CHEUNG: How hands on are you still with the lab?

Zuckerberg’s Direct Involvement in AI Research

MARK ZUCKERBERG: Well, I mean, I moved everyone who sat around me, and now the lab is there. So I mean I’m pretty hands on. Shengjia, the chief scientist, sits right next to me, and a lot of the other teams sit within 15 feet of me.

So what would I say? I mean, look, I’m not an AI researcher, so it’s not like I’m in there telling them what research ideas to do. The things that I can do as CEO of the company are I can make sure that we have the very best people and talent density. I can make sure that we have by far the highest compute per researcher and that we do whatever it takes to go build out all that capacity.

You probably saw the announcement that we had. We’re building this Prometheus cluster which is, I think it’s going to be the first kind of gigawatt plus single cluster that’s like a contiguous cluster for training coming online next year. And we’re building a cluster in Louisiana that is, it’s going to be 5 gigawatts and we’re building several more clusters like that that are just going to be multiple gigawatts.

So yeah, so I mean, and that obviously takes some conviction. I mean we’re talking about many hundreds of billions of dollars of capital. So you both need to have a good business model that can support it and you need to believe in it.

And then there’s all these other parts of running a company where, I don’t know, I mean big companies have positives and negatives, and it’s sort of my job to make sure that we channel all the positives that we can towards this effort and that I can clear as many of the things that would otherwise be annoying about running a company out of the way.

So, when we build something awesome, we’ll get it in our apps and get it to billions of people quickly. That’s a good thing that you can do inside a big company and then whatever the other kind of things that would be more annoying, it’s sort of my job to clear them out of the way.

So how do I do that? Well, I want to make sure that I know the researchers well, right? And that when they have an issue, they feel comfortable texting me or just walking over to my desk. And so I think that’s actually part of running the whole thing well: I have that rapport with them, and in that way I can be in the information flow to understand what issues they’re having so I can go solve them for them.

ROWAN CHEUNG: So it’s like building the culture of everything goes open, everything moves fast. And you’re personally talking with all these researchers?

MARK ZUCKERBERG: Yeah, I mean, I’m doing other stuff for the company too, obviously, but yeah, and, but yes, I’m just trying to make sure that we move as quickly as we can to get the researchers what they need to do the best research in the industry.

The Future of Superintelligence in Meta’s Products

ROWAN CHEUNG: Last question. If we do achieve superintelligence and whenever that is, what are the plans to integrate it into Meta’s existing products?

MARK ZUCKERBERG: Yeah, I think when you have superintelligence, the nature of what a product is will change pretty fundamentally. If you think about our products today, a lot of the big ones. So if you look at Instagram or Facebook or our business model around ads, they’re basically already these very large transformer systems. They’re recommendation systems, not language models today. But they’re basically already these big massive scale machine learning systems.

So I mean, in the future, I do think we’ll get to a point where, instead of having a recommendation system that has some kind of basic understanding of what you might care about and therefore can kind of spit out an ID for a story, and then you have the app that renders it, you’ll have models that you’ll interact with directly. It’ll recommend content, it’ll generate content, you’ll be able to talk to it. So I think this stuff will all be much more integrated.

And then I think that sort of culminates in the glasses vision, where I think what you’re going to get is eventually this always on experience that you can control when it’s on and off, but it can be always on if you want, where you can just let it see what you see, hear what you hear. It can go off and think about the context of your conversations and come back with more context or knowledge that it thinks you should have. When you need an app, it can just generate the UI from scratch for you in your vision.

I’m not sure how long it’s going to take to get to that. I don’t think this is five years. I think it’s going to be quicker. So two, three. It’s hard to know exactly, but every time I think of what a milestone would be in AI, they all seem to get achieved sooner than we think. So my optimism about AI has generally only increased as time has gone on, in terms of both the timeline for achieving it and how awesome it’s going to be.
